Automation is a foundational pillar of digital transformation, but can on-premises ops teams automate bare metal infrastructure? This post defines a few cloud infrastructure terms and discusses how our client RackN does it.

Disclaimer: I consult for RackN, but I was not asked to write this post (or paid to do so), and it did not go through an editorial cycle with them. The following represents my own words and opinions.

Learn from developers

One of the good things that came from developers operating in public cloud environments is that they developed a plethora of tools and methodologies. Many dev teams are developing applications that need to take advantage of on-premises data.

These teams are finding that data gravity is a real thing. They want the application close to where the data is (or is being created), either to reduce latency or to conform to security and other compliance regulations. On-premises operations teams are being asked to build environments for these apps that look more like public cloud environments than traditional 3-tier environments.

As on-premises ops folks, we need to understand the terms developers use to describe their processes, and see how we can learn from them. Once we understand what the desired end state for their environments is, we can apply all of our on-premises discipline to architect, deploy, manage, and secure a cloud-like environment on-premises that meets their end goals.

Instead of fighting with developers, we have the chance to blend concepts hardened in the public cloud with hardened data center concepts to create that cloud-like environment our developers would like to experience in physical datacenters. But to make things work, you’ll need to automate your bare metal infrastructure.

Definitions

Before we get started, let’s set the stage with a shared understanding of the terms Infrastructure as Code, Continuous Integration, and the Continually Integrated Data Center.

Infrastructure as Code (IaC)

This is the Wikipedia definition of IaC:

The process of managing and provisioning computer data centers through machine-readable definition files, rather than physical hardware configuration or interactive configuration tools. The IT infrastructure managed by this comprises both physical equipment such as bare-metal servers as well as virtual machines and associated configuration resources.

Via Wikipedia
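To make "machine-readable definition files" a little more concrete, here is a minimal Python sketch of the idea: describe the desired state as data, compare it with what actually exists, and let code work out what needs to change. Everything in it (the `MachineSpec` fields, the machine names, the `plan_changes` function) is a hypothetical illustration, not the format of any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MachineSpec:
    """Desired settings for one machine (all fields are illustrative)."""
    os: str
    bios_version: str
    raid_level: int


def plan_changes(desired: dict[str, MachineSpec],
                 actual: dict[str, MachineSpec]) -> list[str]:
    """Compare desired and actual state and list the actions needed."""
    actions = []
    for name, spec in sorted(desired.items()):
        current = actual.get(name)
        if current is None:
            actions.append(f"provision {name}")
        elif current != spec:
            actions.append(f"reconfigure {name}")
    # Anything that exists but is no longer defined gets retired.
    for name in sorted(actual.keys() - desired.keys()):
        actions.append(f"decommission {name}")
    return actions


# Hypothetical definition "file" and discovered inventory.
desired = {
    "db-01": MachineSpec(os="rocky-9", bios_version="2.4", raid_level=10),
    "web-01": MachineSpec(os="rocky-9", bios_version="2.4", raid_level=1),
}
actual = {
    "db-01": MachineSpec(os="rocky-9", bios_version="2.1", raid_level=10),
    "old-01": MachineSpec(os="centos-7", bios_version="1.9", raid_level=1),
}

for action in plan_changes(desired, actual):
    print(action)
```

The point of the sketch is that the definition is the source of truth: nobody logs in to a server to decide what to do, and the same definition produces the same plan every time.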

Automating data center components is nothing new; I wrote kickstart and jumpstart scripts almost 20 years ago. Even back then, this wasn’t a simple thing; it was a process. On top of maintaining scripts written in an arcane language, change control was ridiculous. If any element changed, whether through an OS update or patch, a hardware change (memory, storage, etc.), or a change in the network, you’d have to tweak the kickstart scripts, and then test and test until they worked properly again. And bless your heart if you had something like an OS update across different types of servers, with different firmware or anything else.

Cloud providers were able to take the idea of automating deployments to a new level because they control their infrastructure and normalize it (something most on-premises environments don’t have the luxury of doing). And of course, the development team or SREs never see down to the bare metal; they look for a configuration template that fits their end-state goals and start writing code.

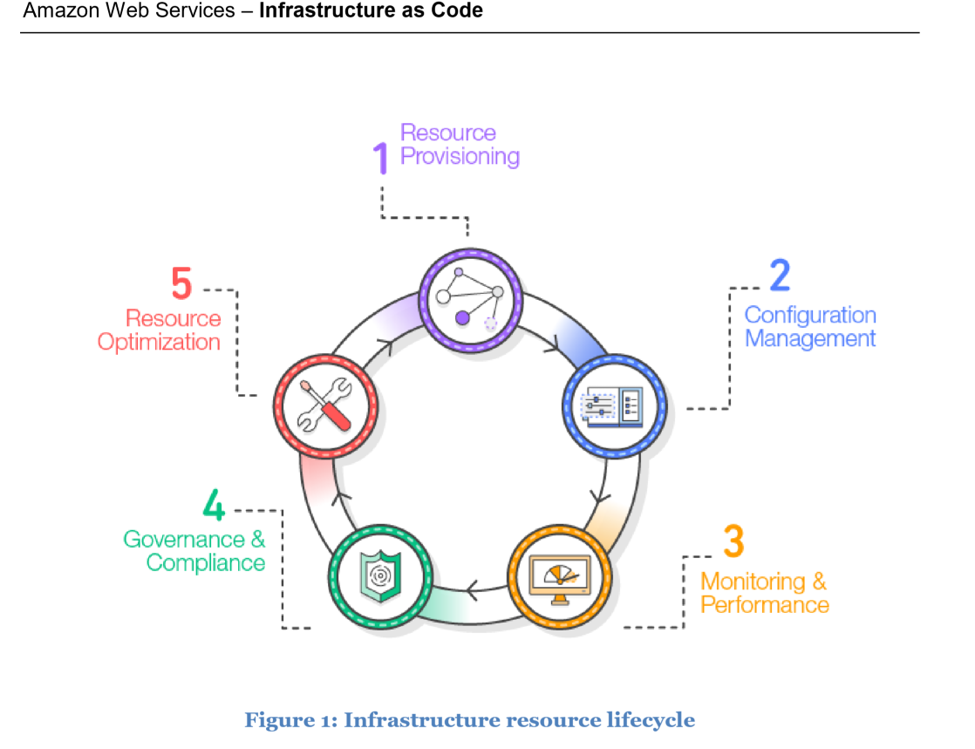

This AWS diagram from a 2017 document describes the process of IaC. Note the five elements of the process shown in the diagram.

There is an entire O’Reilly book written about IaC. The author (Kief Morris) defined IaC this way:

If our infrastructure is now software and data, manageable through an API, then this means we can bring tools and ways of working from software engineering and use them to manage our infrastructure. This is the essence of Infrastructure as Code.

via Infrastructure as Code website

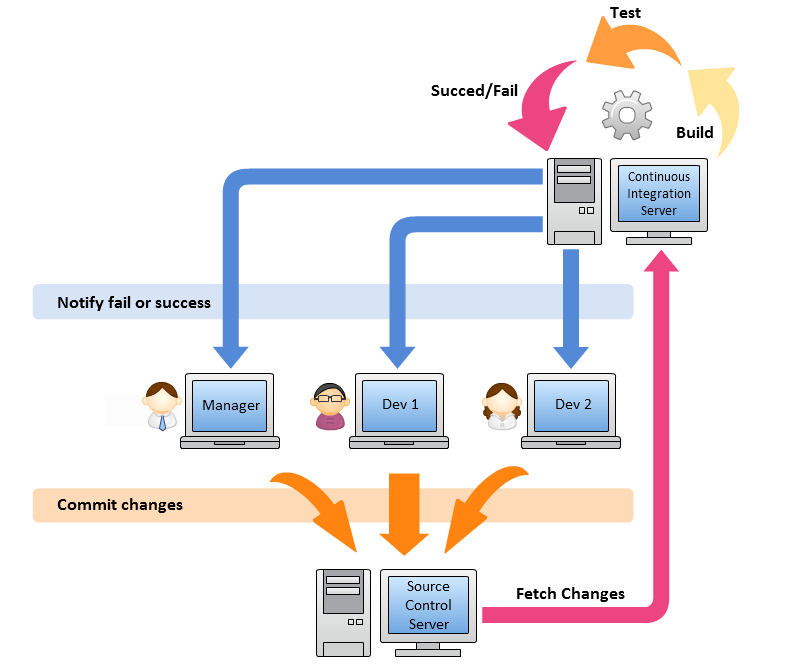

Continuous Integration

Another important term to understand is continuous integration (CI), a software development technique. Here is how Martin Fowler defines it:

Continuous Integration is a software development practice where members of a team integrate their work frequently, usually each person integrates at least daily – leading to multiple integrations per day. Each integration is verified by an automated build (including test) to detect integration errors as quickly as possible. Many teams find that this approach leads to significantly reduced integration problems and allows a team to develop cohesive software more rapidly. This article is a quick overview of Continuous Integration summarizing the technique and its current usage.

via martinfowler.com

If our infrastructure is now software and data, and we manage it via APIs, why shouldn’t on-premises ops teams adopt the lessons learned by software teams that use CI? Is there a way to automatically and continually integrate the changes our infrastructure will inevitably require, something that kickstart never really handled well? Is there a way to normalize any type of hardware or OS? What about day 1 and day 2 operations, things like changing passwords when admins leave, or rolling security certs?

Most importantly, is there a way to give developers the cloud-like environment they desire on-premises? Can developers work with on-premises ops teams to explain the desired end state so that the ops team can build this automation?
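As a rough illustration of what "continually integrating" an infrastructure change could look like, the Python sketch below runs automated checks against a proposed machine definition before it is accepted, the same way a CI build verifies a code change. The checks, the field names, and the `SUPPORTED_OS` list are all assumptions made up for this example.

```python
from typing import Callable, Dict, List, Optional

# A proposed machine definition; the keys used here are illustrative.
Definition = Dict[str, str]
# A check returns an error message, or None when the definition passes.
Check = Callable[[Definition], Optional[str]]

SUPPORTED_OS = {"rocky-9", "ubuntu-22.04"}  # hypothetical supported list


def check_os_supported(definition: Definition) -> Optional[str]:
    if definition.get("os") not in SUPPORTED_OS:
        return f"unsupported os: {definition.get('os')}"
    return None


def check_has_owner(definition: Definition) -> Optional[str]:
    if not definition.get("owner"):
        return "missing owner"
    return None


def integrate(definition: Definition, checks: List[Check]) -> List[str]:
    """Run every check; an empty result means the change may be merged."""
    errors = []
    for check in checks:
        error = check(definition)
        if error is not None:
            errors.append(error)
    return errors


CHECKS = [check_os_supported, check_has_owner]
good = {"name": "web-01", "os": "rocky-9", "owner": "ops"}
bad = {"name": "web-02", "os": "centos-6"}

print(integrate(good, CHECKS))
print(integrate(bad, CHECKS))
```

Each change gets verified automatically and immediately, so a broken definition is caught when it is proposed rather than when a server fails to build.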

Continually Integrated Data Center – a New Methodology for On-Premises Ops Teams

RackN is a proponent of the Continually Integrated Data Center (CI DC). The idea behind CI DC is to manage the data center the way software teams practice CI, but all the way down to the physical layer. RackN’s CEO Rob Hirschfeld explains it this way:

What if we look at our entire data center down to the silicon as a continuously integrated environment, where we can build the whole stack that we want, in a pipeline way, and then move it in a safe, reliable deployment pattern? We’re taking the concept of CI/CD but then moving it into the physical deployment of your infrastructure.

To sum up, CI DC takes the principles from CI and IaC but pushes them into the bare metal infrastructure layer.

RackN Digital Rebar – a CI DC Tool for On-Premises Ops Teams

RackN’s goal is to change how data centers are built, starting at the physical infrastructure layer, by automating things like RAID, firmware, BIOS, and out-of-band (OOB) management as well as OS provisioning, no matter the vendor of any of these elements or of the hardware on which they are hosted.

Digital Rebar is deployed and managed by traditional on-premises ops teams.

It is a lightweight service that runs on-premises, behind the firewall, and integrates deeply into service infrastructure (DHCP, PXE, etc.). It can manage *any* type of infrastructure, from a sophisticated enterprise server to a switch that can only be managed via APIs to a Raspberry Pi. It is a 100% API-driven system and can provide multi-domain workflows.

Digital Rebar becomes the integration hub for all the infrastructure elements in your environment, from the bare metal layer up. Is the requested end state to stand up and manage VMware VCF? RackN has workflows that help you build the physical infrastructure to VMware’s HCL, including hardening. Workflows are built from modular components that let you drive things to a final state. Since it is deployed on-premises, behind the firewall, it is air-gappable for high-security environments.
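The idea of building workflows from modular components can be sketched in a few lines of Python. To be clear, this is not Digital Rebar's API; the stage names and the `run_workflow` helper are hypothetical, and real stages would call out to hardware and provisioning services instead of updating a dictionary.

```python
from typing import Callable, Dict, List

# The state of one machine as it moves through a workflow (illustrative).
MachineState = Dict[str, str]
# A stage takes the current state and returns the updated state.
Stage = Callable[[MachineState], MachineState]


def update_firmware(state: MachineState) -> MachineState:
    return {**state, "firmware": "current"}


def configure_raid(state: MachineState) -> MachineState:
    return {**state, "raid": "configured"}


def install_os(state: MachineState) -> MachineState:
    return {**state, "os": "installed"}


def run_workflow(stages: List[Stage], state: MachineState) -> MachineState:
    """Apply each stage in order, driving the machine toward its end state."""
    for stage in stages:
        state = stage(state)
    return state


# A workflow is just an ordered list of reusable stages.
BARE_METAL_WORKFLOW = [update_firmware, configure_raid, install_os]

final = run_workflow(BARE_METAL_WORKFLOW, {"name": "node-01"})
print(final)
```

Because each stage is independent, the same pieces can be reordered or reused in a different workflow, for example one that skips RAID configuration on a machine with a single disk.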

What’s new in Digital Rebar v4.3

Here are the new features available in the 4.3 launch:

- Distributed Infrastructure as Code – delivering a modular catalog that manages infrastructure from firmware to operating systems and cluster configuration.

- Single API for distributed automation – providing both single pane-of-glass and regional views without compromising disconnected site autonomy.

- Continuously Integrated Data Center (CIDC) workflow – enabling consistent and repeatable processes that promote from dev to test and production.

Real Talk

Not all compute will be in the cloud, but developers have new expectations of what their experience with the data center should be. Most devs work with languages and tools designed for the public cloud. Traditional data center platforms like VMware vSphere are even embracing cloud native tools like Kubernetes. All of this is proof we’re in the midst of the digital transformation everyone has been telling us about.

Sysadmins, IT admins, even vAdmins: this is not a bad thing! On-premises ops teams can learn from dev disciplines such as IaC and CI, and we can apply all the lessons we know about data protection, sovereignty, etc. to new ops processes such as CI DC. It’s long past time to adopt a new methodology for managing data centers. Get your learn on, and get ahead of the curve. Our skills are needed; we just need to keep evolving them.
