AI succeeds or fails based on context, trust, and freedom. QlikConnect made that unmistakably clear.

Enterprises don’t lack AI ideas. What they lack is the context to guide action, the trust to justify automation, and the freedom to adapt without introducing risk. This was the underlying message at this year’s QlikConnect conference. The vibe was pragmatic. Qlik didn’t frame AI progress as more automation or smarter models. Nearly every major announcement assumed a simpler starting point: AI only performs as well as the trust, context, and freedom wrapped around it.

That framing showed up across analytics, data engineering, governance, services, and customer outcomes. This post unpacks what that actually means.

Context: Why AI Fails Without Business Meaning

Generic AI fluency is not the problem enterprises are trying to solve. The problem is that AI needs to act with situational awareness inside real workflows.

AI answers without enterprise context will never translate into business decisions. Many customers feel frustrated because AI produces outputs that are hard to trust, hard to explain, and disconnected from how the business actually runs.

LLMs do not infer context on their own. Instead, teams must supply it through governed analytics and domain definitions. Without that, AI may be impressive but will remain operationally shallow.

This is why context showed up so often at QlikConnect. Without shared business meaning, AI speeds up analysis without improving outcomes.

Trust: How Agentic AI Turns Weak Data Into Risk

Trust is a board‑level risk issue. As AI moves from answering questions to triggering actions, the cost of being wrong rises sharply. Automated decisions based on weak, stale, or misunderstood data create operational risk, compliance risk, and reputational risk in real time.

Agentic systems assume downstream consequences. Once agents begin recommending, predicting, or acting, trust can no longer be implied or assumed. It has to be inspectable, measurable, and operational.

Before taking action, teams need a clear way to answer one critical question:

Is this data good enough to rely on right now?

That question surfaced repeatedly at QlikConnect. Speakers didn’t treat trust as a feeling or a governance checkbox. Instead, they treated it as a signal. And without explicit trust mechanisms, agentic AI either stalls in pilot mode or fails under production pressure.
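To make "trust as a signal" concrete, here is a minimal sketch of a gate an agent could check before acting. It is not Qlik's implementation or API. Every name in it (`DatasetSignals`, `trust_score`, `decide`), the three signals, the 24-hour freshness window, and the 0.8 threshold are assumptions chosen purely for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class DatasetSignals:
    """Hypothetical quality signals for one dataset (illustrative only)."""
    last_refreshed: datetime      # when the data was last loaded
    completeness: float           # share of required fields populated, 0.0 to 1.0
    validation_pass_rate: float   # share of rows passing quality rules, 0.0 to 1.0


def freshness_score(last_refreshed, now, max_age):
    """Decay linearly from 1.0 (just refreshed) to 0.0 (max_age old or older)."""
    age = now - last_refreshed
    return min(1.0, max(0.0, 1.0 - age / max_age))


def trust_score(signals, now, max_age=timedelta(hours=24)):
    """Return (score, parts). The weakest signal sets the overall score."""
    parts = {
        "freshness": freshness_score(signals.last_refreshed, now, max_age),
        "completeness": signals.completeness,
        "validation": signals.validation_pass_rate,
    }
    return min(parts.values()), parts


def decide(signals, now, threshold=0.8):
    """Let the agent act only when the score clears the (assumed) threshold."""
    score, parts = trust_score(signals, now)
    action = "act" if score >= threshold else "escalate_to_human"
    return action, parts


now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
fresh = DatasetSignals(now - timedelta(hours=2), 0.98, 0.95)
stale = DatasetSignals(now - timedelta(hours=20), 0.98, 0.95)

print(decide(fresh, now)[0])  # act
print(decide(stale, now)[0])  # escalate_to_human
```

Taking the minimum rather than an average is a deliberate choice in this sketch: one weak signal, such as stale data, should be enough to block automation. Returning the individual parts alongside the decision keeps the result inspectable after the fact.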

Freedom: Why AI Collapses Under Lock‑in and Rigidity

As AI becomes embedded deeper into enterprise workflows, architectural choice starts to matter more than model novelty. Data captivity quietly constrains what organizations can do, how quickly they can adapt, and how safely they can operate.

AI value degrades when organizations are forced into:

- Closed ecosystems that limit interoperability

- Brittle pipelines that break under change

- Single‑vendor dependency that restricts deployment options

These constraints collide directly with today’s operating reality. Data residency rules, sovereignty requirements, industry‑specific compliance, and regional deployment mandates are no longer edge cases. They are architectural inputs.

Freedom is not about avoiding standards. It is about preserving the ability to adapt as models change, regulations evolve, and business priorities shift.

When AI cannot move, cannot integrate, or cannot operate under real constraints, its value erodes fast.

Where AI Context, Trust, and Freedom Showed Up in the QlikConnect Product Announcements

The context‑trust‑freedom framing was not a slogan. It showed up repeatedly in the products Qlik announced.

Agentic Analytics: Turning Context Into Action With Accountability

Agentic analytics was positioned less as “AI gets smarter” and more as “AI becomes consequential.” Qlik’s expansion across Qlik Answers, Discovery Agent, Predict Agent, Automate Agent, and MCP Server reinforced a simple idea: insight without execution is a dead end.

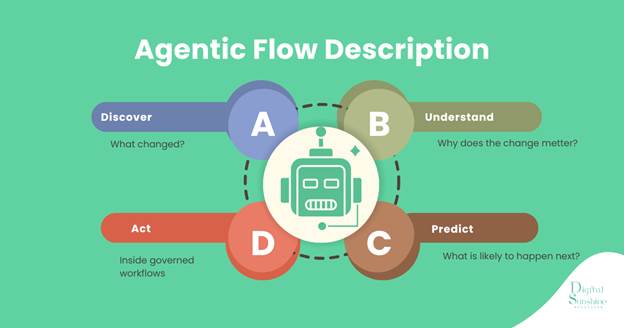

What stood out was not the number of agents, but the shape of the flow:

Most enterprise AI failures happen between those steps. Answers surface, decisions stall, and action never happens. Or, worse, action happens without traceability.

Agentic analytics only works when business context is consistently shared, reasoning is explainable, and actions can be inspected after the fact. At QlikConnect, the team leaned hard into that reality instead of pretending autonomy is the goal.

Agentic Data Engineering: Making AI Context Usable at Scale

One of the quieter but more important shifts this year was where agentic thinking showed up. It did not stop with analytics users. It moved into data engineering itself. The very active (and vocal!) Qlik community pushed hard for this after last year’s conference.

Most organizations do not lack AI ambition. However, they may lack fresh, reliable, well‑governed data delivered at the speed the business now expects. Data teams carry this load, and pipeline work is often one of the most time-consuming parts of the AI lifecycle.

Declarative pipelines, real‑time routing, streaming, and agent‑assisted workflows are not luxuries. In fact, they are requirements for keeping data usable under constant change. By focusing on intent-driven pipeline creation and reducing manual assembly, Qlik positioned agentic execution as a way to reduce backlog without reducing control.

This is the difference between “AI helps me write code” and “AI helps my team ship reliable data faster.”

Where Do Humans Fit in the AI Context–Trust–Freedom Lifecycle?

The most important takeaway from QlikConnect was not about autonomy. It was about accountability. Nearly every announcement assumed the same thing: humans are not being removed from the loop. The loop is being redesigned around them.

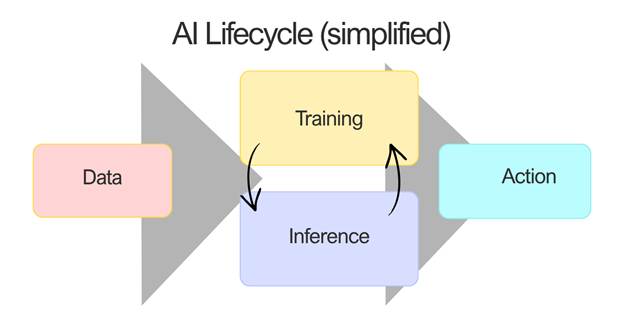

Where humans fit in the AI lifecycle.

At every stage of the AI lifecycle, humans determine value. It shouldn’t be human-in-the-loop as a control mechanism, but community-in-the-loop as an operating model.

An AI model will never stumble upon the solution to a problem on its own. Domain experts are the ones who decide which signals matter, validate outputs, contextualize edge cases, and ultimately turn insight into action.

Research consistently shows better outcomes, fairness, and trust when experts remain actively engaged.

People new to a field can ramp faster with AI. But without guidance, error confidence grows faster than competence. Trust without understanding becomes a risk multiplier.

Consumers of AI output, especially younger workers, still prefer human‑authored work. Studies have shown that Gen Z trusts human‑only output over AI‑assisted work by more than 2:1. The pattern repeats: adoption without trust doesn’t scale.

What AI Context, Trust, and Freedom Actually Enable

Despite the technology on display, QlikConnect ultimately told a human story.

AI systems do not decide what matters. People do. AI does not define acceptable risk, navigate tradeoffs, or understand when conditions have changed. Organizations do. Every AI system encodes assumptions about how work happens, who is accountable, and what can safely be automated.

QlikConnect 2026 surfaced a clear pattern: AI only works when organizations design for context, trust, and freedom from the start. Context gives outputs meaning. Trust determines whether action is safe. Freedom determines whether systems can adapt without breaking when real‑world constraints appear.

When AI fails, it is rarely because the model was not capable. It is because the environment around it was not designed to support consequence. In that sense, AI is not replacing human performance. It is revealing how well, or poorly, the work around it has been designed.

That is the real lesson. AI is not an intelligence shortcut. It is a force multiplier. And it rewards organizations that design for reality rather than novelty. And no one tells this story better than Qlik!
